Build Trust in Users by Designing with Their Privacy in Mind

A growing number of users are moving from Facebook, a platform that emphasizes broadcasting and amplified sharing, to Instagram, a platform where sharing is more intimate and controlled. Although Instagram is owned by Facebook, its simplicity gives it a feeling of privacy and control. Whether this shift in popularity stems from underlying worries about privacy or from an attraction to a simpler form of media consumption, people are becoming more aware of their digital footprint. Some people express concern for their privacy but don’t act accordingly, a gap known as the privacy paradox. I am definitely more cautious about how any platform or digital service treats my data. The first thing I do when signing up for a new platform is check what happens to my information and deny access to anything I don’t see a direct need for. As a designer, I aim to create designs that respect users’ need for privacy. Here’s what I try to keep in mind while designing.

Why does privacy matter?

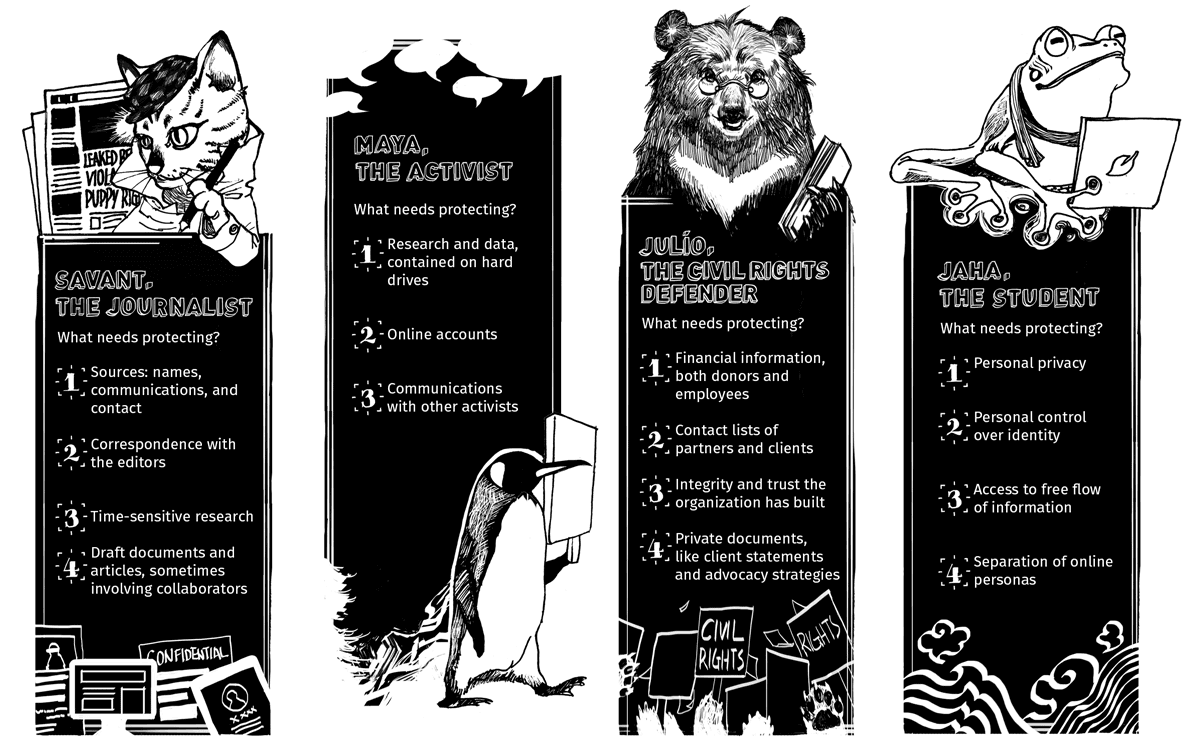

I used to think digital privacy wasn’t a big deal because I had nothing to hide. After having my information compromised in large-scale data breaches and being served ads that were eerily on the nose, I realized this attitude just couldn’t fly anymore. While it is partly my responsibility as a user to ensure my online safety, digital platforms should provide tools and strategies that help users protect themselves. Additionally, some people face greater risk than others if their data is exposed: journalists, activists, human rights defenders and individuals from marginalized communities, for example. Here are four ways we design more trustworthy experiences for users at ASL19:

Determine risk levels

Personas with varying risk levels and security needs (source: Access Now)

Everyone wants to feel that the companies and products they invest in have their safety at heart. Some people, however, have more immediate privacy concerns than others. These groups must pay special attention to their digital safety and to keeping their online identity separate from their personal one. This guide is a great starting point for identifying user risk levels. It’s important to determine the risks users may face online and which actions can reduce those risks. Some important questions to ask are: What’s at stake for my users? How can I make this a more trustworthy experience for them?

Be transparent

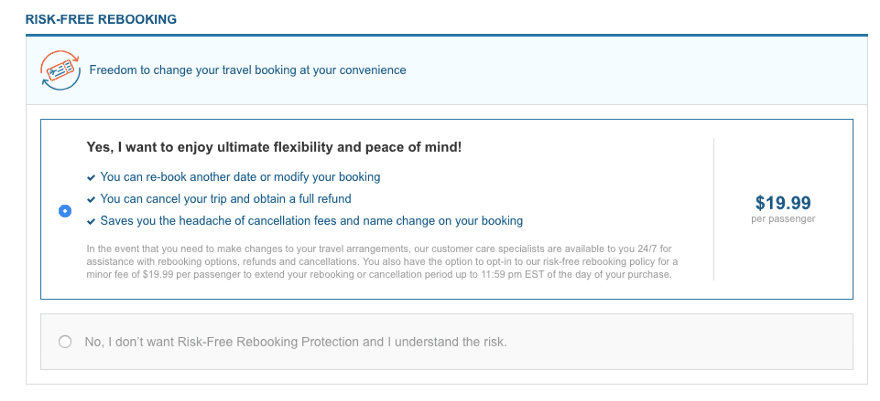

I don’t think anybody actually enjoys booking a flight or accommodation online. Many aggregator sites use deceptive UI to push people into quick, uninformed decisions. Some try to sneak in fees or use guilt-inducing copy, which makes for a negative experience. Designers call these tactics dark patterns: interfaces made to trick users and deliberately confuse them in order to get a desired outcome from them.

This option is selected by default; the alternative is less visible and uses copy intended to make the user anxious.

Completing even basic actions already places a heavy cognitive load on users, without their also having to dodge tricky options and hunt for important information. This is why I really appreciate it when a website or platform tells me up front why it needs my email address or permission to view my phone calls. Users need to be told what information is necessary to complete a process and how that information will be used or shared. Additionally, anything decided for users that isn’t in their own interest is invasive. Remember when Apple automatically added a U2 album to people’s iTunes libraries without their permission? Don’t do that.

Make everything predictable

Many of the websites and apps built at ASL19 are designed for at-risk users. Reaching their goal can involve a process that exhausts them or causes anxiety. For example, members of a minority group might want to discuss policy changes but be nervous about exposing themselves in any way. A predictable process can reduce the friction these users are already experiencing. This means clearly explaining what happens when a user agrees to do something or provides information. Understanding the digital conventions users are already familiar with is another way to make the experience more predictable. Of course, this doesn’t mean the experience has to be devoid of delight; it just has to do what people expect it to do.

Give users control

Many of ASL19’s projects facilitate user dialogue and give users access to information they typically cannot find. This puts our users’ baseline risk level higher than that of the average internet user, which is why separating their online persona from their personal identity is so crucial. Anonymity is, after all, one of the greatest tenets of the internet.

Take a look at how Facebook used to handle privacy settings. It was a confusing, annoying labyrinth of menus, and it felt like no matter what you did, something still slipped through the cracks. As worries about oversharing and data collection grew, privacy groups and users demanded more control over their Facebook accounts and profiles. Thankfully, the process has since been simplified, but the damage to Facebook’s credibility is already done.

In a digital age where data is a valuable resource, it’s important for users to be able to fine-tune access to their data. Users should also control how their data is stored and how it is destroyed. This information is often buried in de-emphasized, difficult-to-parse privacy policies. Giving users access to their own information, and control over it, builds a healthier relationship with the platform.

Currently, digital security and privacy protection depend on an individual’s cyber literacy, which leaves many less technically savvy users vulnerable. Keeping these basic pillars in mind when designing a product builds a relationship of trust with users and supports safer online interaction.