A War of Narratives Powered by AI

As in the first 72 hours of the war, AI-generated content has played a major role in shaping the online narrative, though its use has not been limited to one side of the conflict.

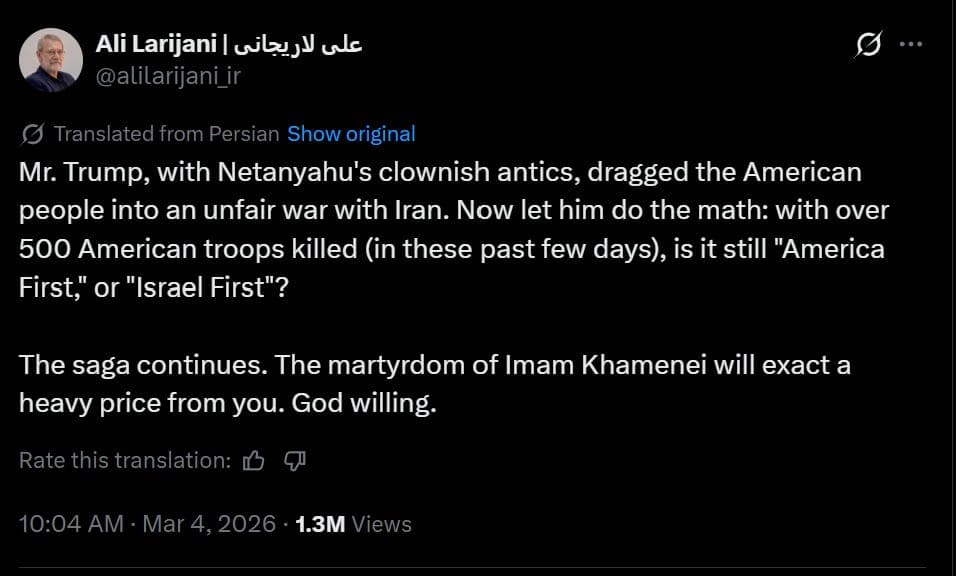

One recurring theme was the portrayal of overwhelming Iranian military success. This message was reinforced both by AI-generated visuals circulating online and by statements from officials. For example, Ali Larijani, the secretary of Iran’s Supreme National Security Council, baselessly claimed that more than 500 U.S. troops had been killed in recent days.

Another recurring narrative framed Iran’s ruling establishment as weakened, humiliated, or in retreat. Several pieces of manipulated or AI-generated content circulated online claiming to show members of the Revolutionary Guard fleeing with their families, officials attempting to escape the country, or symbolic imagery mocking senior leaders. In each case, the material relied on fabricated or altered visuals intended to suggest panic and collapse within the state apparatus.

Other misleading posts identified during the same period were more scattered, but they often again involved AI-generated or manipulated material. In one example, a video of former Iranian president Mahmoud Ahmadinejad at a burial ceremony circulated online as supposed proof that he was alive, after rumors claimed he had been killed in the first wave of strikes. The footage had actually been recorded weeks earlier at an unrelated funeral.

Lastly, as a follow-up to our earlier investigation into the explosion at the primary school in Minab, we published a new analysis that provides additional details about the incident and the circumstances surrounding the strike.

Exaggerating the Impact of Iran’s Attacks

Ali Larijani, the secretary of Iran’s Supreme National Security Council, wrote on X that more than 500 American troops had been killed in the war with Iran in recent days, a claim that was widely repeated by accounts close to the Iranian government. He did not provide any evidence, details about where the deaths occurred, or the identities of those allegedly killed. Available reporting does not support the figure. Official statements from the U.S. military confirmed the deaths of six American service members at the time the claim circulated. In other statements from Iranian officials, casualty figures referred to a combined number of killed and wounded rather than deaths alone. No credible reports or independent sources have indicated that hundreds of U.S. troops were killed. The claim represents a severe exaggeration.

AI-Generated Video Falsely Portrayed an Iranian Missile Strike on Israel

A video circulated on Telegram channels linked to the IRGC under the title “Apocalyptic footage of Iran’s missile barrage.” The clip appeared to have been filmed from a window, with an Israeli flag visible, implying that it showed Iranian missiles striking Israeli cities. Several indicators show that it was not real. The rooftops across the neighborhood look nearly identical and repeat the same pattern, suggesting the scene was artificially generated rather than filmed in a real city, and no credible news outlets reported or verified the footage. These details indicate the video was created using AI.

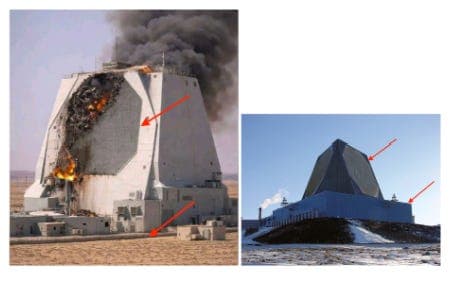

AI-Generated Image Falsely Presented as Damage to a U.S. Radar in Qatar

Left: AI-generated image. Right: authentic satellite image of the radar site

Accounts supportive of the Iranian government circulated an image they said showed the destruction of the U.S. AN/FPS-132 early-warning radar in Qatar after it was struck by an Iranian projectile. Satellite images later showed that the radar site in northern Qatar had indeed been hit and damaged. The picture spreading online, however, does not match the real structure of the radar installation. In the circulating image the antenna appears as a solid octagon, while the actual system consists of circular antenna panels inside an octagonal frame. The radar is also shown sitting directly on the ground, even though the real installation is mounted on top of a large building surrounded by other structures and paved roads. These inconsistencies indicate the image was generated with AI.

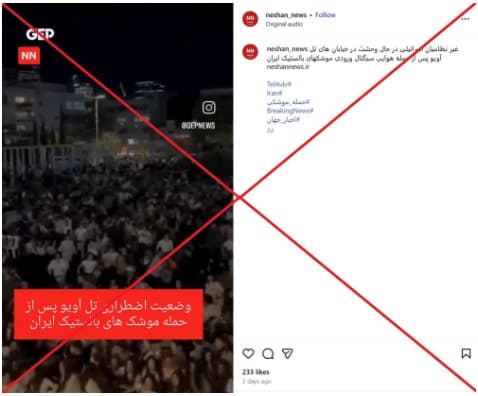

Old Video of Crowd Panic in Tel Aviv Misattributed to Iranian Missile Attacks

A video circulating online was described as showing people in Tel Aviv fleeing in panic after Iranian ballistic missile strikes. The footage, however, is not related to the war. It dates back to April 2025 and was previously broadcast by the Israeli channel ILTV. The scene took place at Habima Square in Tel Aviv, where police arrested a suspect and people nearby mistakenly believed a terrorist attack was underway. The misunderstanding caused panic in the crowd and left more than twenty people injured. The video does not show people reacting to Iranian missiles.

AI-Generated and Old Videos Falsely Presented as Destruction in Israeli Cities

Several videos circulating online were presented as scenes of massive destruction in Israeli cities following Iranian missile strikes. The clips include footage of burning fighter jets, a devastated cityscape described as Jerusalem, and aerial views of a heavily damaged urban area said to be Tel Aviv. None of them reflect recent events: some were generated using AI, while others are older footage of unrelated disasters. One clip showing aircraft on fire contains clear visual inconsistencies, including unrealistic aircraft shapes, mismatched object sizes, and firefighters spraying water onto undamaged areas. Another video claiming to show the “total destruction” of Jerusalem is also AI-generated, with uniform debris and distorted building shapes typical of synthetic imagery. A third widely shared video actually comes from the 2023 earthquake in Turkey that devastated the city of Kahramanmaraş. Real damage from Iranian missile strikes has been far more limited: reports indicate that several buildings in Israel were hit and that the strikes caused casualties, but the large-scale devastation portrayed in these videos did not occur.

Portraying the Collapse and Humiliation of the Ruling Establishment

AI-Generated Video Misrepresented as a Revolutionary Guard Family Fleeing

A video circulated on social media claiming to show a member of Iran’s Revolutionary Guard fleeing with his family. In the clip, a man in military uniform and a woman wearing a chador rush toward a car and get inside, while the person filming can be heard saying, “They are escaping.” Several elements show that the footage was not real: a strange shadow briefly appears and then disappears at the beginning of the video, the woman’s face is never clearly visible, and the car’s license plate does not match the format used in Iran. The audio also sounds unnatural; the voice of the person filming resembles studio audio rather than sound recorded outdoors. These inconsistencies indicate the video was generated with AI.

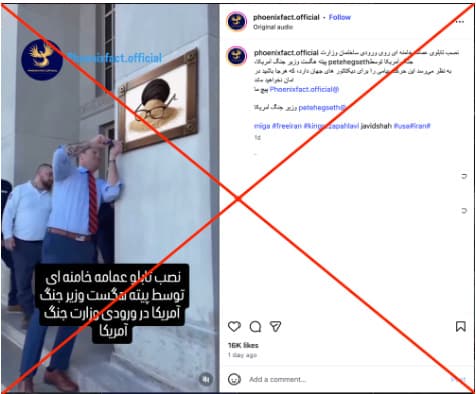

Edited Video Falsely Showed Khamenei’s Turban and Glasses at the Pentagon

An Instagram video suggested that the U.S. defense secretary had installed a sign showing Ali Khamenei’s turban and glasses on a mound of dirt at the Pentagon. The footage had been altered. The original video was recorded in November 2025, when U.S. Defense Secretary Pete Hegseth installed a new “Department of War” sign at the Pentagon’s main entrance, replacing the long-standing “Department of Defense” plaque. In the version shared online, the original sign was digitally replaced with the image of the turban and glasses, creating the false impression that such a display had taken place.

AI-Generated Video Falsely Presented as IRGC Members Fleeing to Afghanistan

A short video shared online showed masked individuals walking outdoors and holding passports up to the camera. It was presented as footage of members of Iran’s Revolutionary Guard and associates of Ali Khamenei fleeing to Afghanistan after changing their clothes. The passports visible in the video contained clear irregularities: the text appeared as distorted, unreadable characters, and only the “Allah” emblem was clearly recognizable. The layout also did not match the design of official Iranian passports, including the placement of the emblem relative to the text. These flaws indicate the video was generated with AI and does not document a real event.

Further Misleading Content

AI-Generated Video Falsely Claimed to Show Bombing in Tehran

A video circulated widely on social media claiming to show Tehran being bombed. The clip spread across Persian and non-Persian accounts, often presented as footage from inside the city. In the video, explosions appear along a street while a voice nervously tells someone to “go inside,” creating the impression that it was recorded during an attack. Factnameh’s review found the video was not real. Several details exposed it as fabricated: the shop signs were unreadable, the cars did not match models commonly seen in Iran, and the missiles moved in impossible ways, including appearing to travel upward after impact. These inconsistencies showed the footage had been created using AI.

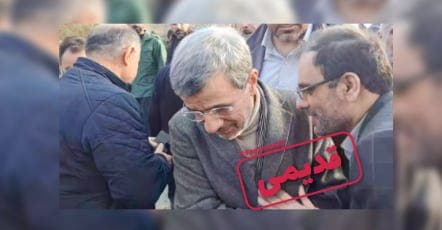

Old Video of Ahmadinejad at a Funeral Resurfaced After Rumors of His Death

A video showing former Iranian president Mahmoud Ahmadinejad among mourners at a burial ceremony was presented as footage of him attending the funeral of his bodyguards after the reported attack on his home. The clip circulated amid rumors that Ahmadinejad had been killed when his residence was struck in the initial hours of the war. The scene, however, is unrelated to those events: the video was recorded in January 2026 in Shirgah, Savadkuh, where Ahmadinejad attended the funeral of the mother of Seyed Hassan Mousavi, who served as his chief of staff during his presidency.

False Claim Circulated That Lamine Yamal Offered Condolences for Khamenei’s Death

A claim circulated online that Lamine Yamal, the Barcelona football player, had expressed condolences for the death of Ali Khamenei. The claim spread widely in Persian as well as English, Arabic, and French posts. No such message appears on Yamal’s official Instagram, TikTok, or YouTube accounts, where he rarely posts about political or social issues. The claim appears to have originated from a Facebook fan page using his name; the page itself states that it is only a fan page and not the footballer’s official account.